
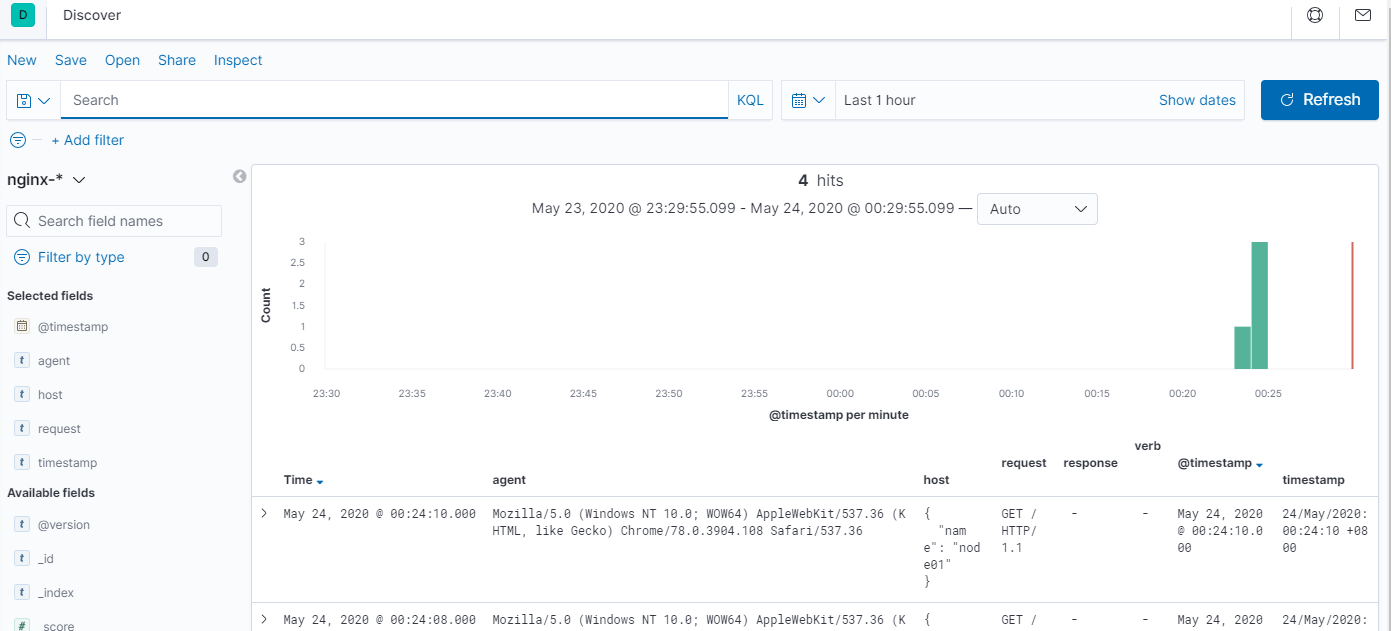

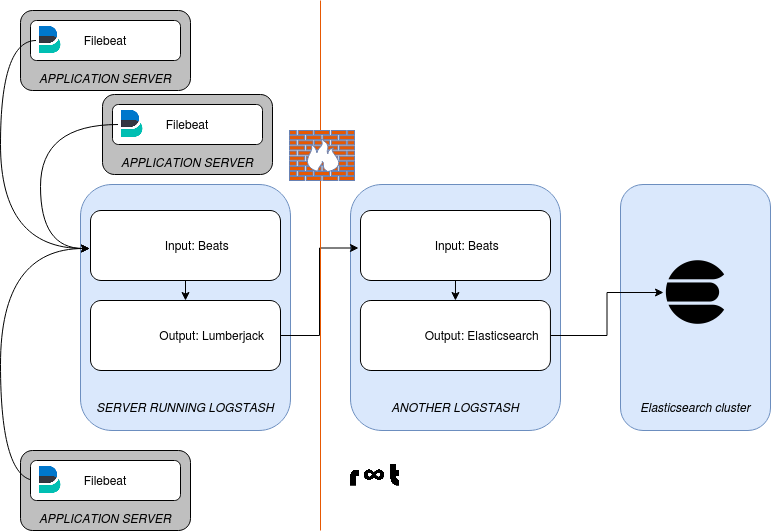

Fragments of the filter configuration: `match =>` and `database => "/opt/logstash/vendor/geoip/GeoLite2-City.`

The processing of a batch is complete when Logstash gets acknowledgment from all outputs about successful transmission. Only after that can the worker process another batch of messages.

The setup:

- Filebeat installed via APT (running configuration: `/etc/filebeat/filebeat.yml`), referred to as cowrie-*.
- Filebeat unzipped and made persistent via `/etc/rc.local` (running configuration: `/home/user/filebeat2/filebeat.yml`), referred to as cowrie-firewall-*.
- Both Filebeat instances have their own configurations and are configured to send logs to Logstash on different ports.
- Logstash runs on a single host with a separate pipeline for each ingest, each with its own output section.
- Logs sent to pipeline "cowrie- " on port 5045 are visible in the index of pipeline "cowrie-logstash-" (pipeline.id: honeypot_ingest).
- Logs sent to pipeline "cowrie-firewall*" on port 5055 are visible in the index of pipeline "cowrie-logstash-*" (pipeline.id: cowrie_firewall_ingest).
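The two-pipeline layout described here could be declared in Logstash's `pipelines.yml` roughly as follows. This is a minimal sketch, not the reporter's actual file: the two `pipeline.id` values come from the text above, while both `path.config` locations are hypothetical.

```yaml
# pipelines.yml (sketch): one entry per pipeline, so that each ingest
# keeps its own inputs, filters and outputs.
- pipeline.id: honeypot_ingest
  # Hypothetical path; assumed to contain the beats input on port 5045.
  path.config: "/etc/logstash/conf.d/honeypot/*.conf"

- pipeline.id: cowrie_firewall_ingest
  # Hypothetical path; assumed to contain the beats input on port 5055.
  path.config: "/etc/logstash/conf.d/cowrie_firewall/*.conf"
```

One thing worth checking in a setup like this is that the `path.config` globs do not overlap. When Logstash loads several configuration files into the same pipeline (for example when started with `-f` pointing at a directory), it concatenates them, so every event passes through every output in that pipeline.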

There are two different instances of Filebeat running on a single host. Both have different installation and configuration mechanisms.

- Logstash version: 7.16.0 (running on Raspberry Pi 4 Model B Rev 1.1 with AArch64 using Ubuntu 20.04 LTS)
- How was the Logstash plugin installed: default plugin
- How is Logstash being run: as a service using systemd
- JVM: OpenJDK 64-Bit Server VM Temurin-11.0.13+8 (build 11.0.13+8, mixed mode)
- OS version: Ubuntu 20.04 LTS (5.4.0-1047-raspi #52-Ubuntu SMP PREEMPT Wed Nov 24 08:16: aarch64 aarch64 aarch64 GNU/Linux)

Description of the problem including expected versus actual behavior:

- Expected: input data should go to its respective index.
- Error: data from one pipeline, which has a specific index, is also going to another index.

I have a Filebeat instance reading a log file, and there is a remote HTTP server that needs to receive the log outputs via REST API calls. For now I'm sending Filebeat output to Logstash, and Logstash does some filtering and passes the log to the remote server (this is done using the Logstash http output plugin).

The Logstash output plugin is compatible with OpenSearch and ... If you use ingest pipelines with OpenSearch, consider using the 7.10.2 versions of Beats. If you want to use a Logstash pipeline instead of an ingest node to parse the data, see the filter and output settings in the ... file takes its input from the open source version of Filebeat (Filebeat OSS). In ELK 7.x, Filebeat can ingest data directly into Elasticsearch.
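For the direct-to-Elasticsearch case, a minimal sketch of a `filebeat.yml` might look like this. The log path and the Elasticsearch address are assumptions made for illustration, not values from the reports above.

```yaml
# filebeat.yml (sketch): ship a log file straight to Elasticsearch,
# without Logstash in between.
filebeat.inputs:
  - type: log
    enabled: true
    paths:
      - /var/log/example.log     # hypothetical log file

output.elasticsearch:
  hosts: ["localhost:9200"]      # assumed local Elasticsearch node
```

Filebeat allows only one output to be enabled at a time, so `output.logstash` would have to be removed or commented out when switching to this form.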

Now, we need a way to extract the data from the log file we generate. So, let's edit our filebeat.yml file to extract data and output it to our Logstash instance.

    filebeat.inputs:
      - type: log
        paths:
          - /var/log/number.log
        enabled: true
    output.logstash:
      hosts: ["localhost:5044"]

And that's it for Filebeat. With the setting `output.logstash: hosts: 127.0.0.1:5044`, Filebeat is configured to use the Logstash output.

OpenSearch Service supports the logstash-output-opensearch output plugin. I made sure to update the Logstash configuration for Wazuh and Elasticsearch.

I am using the latest version of the ELK Stack and I have Filebeat installed on different servers. I am using different Filebeat modules to send the logs. I understand that when modules are enabled it is not necessary to include the log paths in the inputs of filebeat.yml. But if I am using a module (system, mysql, postgres, apache, nginx, etc.) to send records to Logstash through Filebeat, how do I insert custom fields or tags the same way I would in filebeat.yml when I configure the log paths myself? Each module handles its own configuration by default, including the paths of the log files.

For this, I need to somehow conditionally detect the log I am reading (apache, system, mysql, access.log, error.log, IP/hostname, application) so that I can insert custom fields to filter on later in Kibana. In the case of the GlassFish application server, I created an input that includes path, fields and tags in /etc/filebeat/filebeat.yml, and it works. How do I add custom fields or tags as metadata according to the type of log or log source, so that I can filter in Kibana, considering that each module handles its own path configuration?
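One possible approach to the question above is to override input settings inside the module's own configuration file. Filebeat modules accept an `input` section per fileset whose settings are applied to the input the module starts. The sketch below assumes the nginx module; the tag and field values are hypothetical, and the behavior is worth verifying against your Filebeat version.

```yaml
# modules.d/nginx.yml (sketch): attach tags and custom fields per fileset.
- module: nginx
  access:
    enabled: true
    input:
      # Settings under "input" are applied to the underlying Filebeat input.
      tags: ["web", "access"]            # hypothetical tags
      fields:
        application: "shop-frontend"     # hypothetical custom field
  error:
    enabled: true
    input:
      tags: ["web", "error"]             # hypothetical tags
      fields:
        application: "shop-frontend"     # hypothetical custom field
```

With the default `fields_under_root: false`, the custom field arrives as `fields.application`, so a Logstash conditional would test `[fields][application]` and a Kibana filter would use `fields.application`.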
